There are many myths, misunderstandings, and differing interpretations of almost every concept, term, and approach in testing. Test strategy is one of the most misunderstood concepts in software testing. If you search for test strategy online, you will find dozens of near-identical definitions, often copied from outdated standards written more than a decade ago. The best modern definitions are:

What are test strategies?

Practical test strategy definition:

Rapid software testing — a test strategy is the set of ideas that guides our choices about what testing to do.

The focus lies in:

→ set of ideas

→ guide of choices

In business, strategy is commonly defined as:

HBR on strategy — a strategy is an integrative set of choices that positions you on a playing field of your choice in a way that you win.

The key to this definition is:

→ an integrative set of choices

ISTQB glossary — a description of how to perform testing to reach test objectives under given circumstances.

This is about contextual application: the strategy depends on factors such as risk, industry regulations, safety requirements, and available skills.

Perspectives vary widely among QA teams:

- A few are interested in test strategy but lack the know-how.

- Often, automation, regression, or performance testing live in silos.

- Others confuse it with a test plan, an automation strategy, or a company testing policy.

- Some believe they do not need one, so it does not exist at all.

- Some testing teams treat it as a formal document no one reads.

As a result:

Software test strategy often becomes a buzzword rather than a practical testing tool that helps teams deliver high-quality software.

❌ QA engineers stop seeing real value in test strategies.

❌ Managers reduce strategy to a checkbox activity.

❌ Development teams jump straight into test plans, automation, regression testing, exploratory testing, API testing, or AI tools without understanding why.

— Why has test strategy become so confusing?

The problem is not a lack of information. The problem is a focus on templates and documents rather than on decisions and outcomes.

✅ A test strategy is about choices, not paperwork!

In this article, we’ll break down what a test strategy really is, why many teams struggle with it, and how a modern AI test management platform like testomat.io helps turn strategy into live documentation.

Components of a Test Strategy

People often jump straight to developing a strategy. For us, however, it is crucial first to understand which approach to choose and why, and only then determine how we will plan testing efforts based on that.

The foundation of a good strategy, something even McKinsey uses, is identifying the core problem. Consultancies are not infallible gurus, but the essence holds: first define which problems we are solving, then determine how we will solve them, and only then create an action plan, not the other way around.

Applied to Quality Assurance, this means:

- An honest look at the obstacles: Fundamentally, strategy is about risk anticipation. A good strategy requires us to ask: What are the challenges and risks that matter most to our success? Given the inherent complexity of the system, where are the critical points most likely to fail?

- Test objectives & quality goals: This is a clear, shared understanding of what good enough to release truly means for this specific product. It encompasses the high-level Exit Criteria, Definition of Ready and Definition of Done for testing hand-offs.

- Definition of scope: This component identifies which parts of the system will be tested, which will be excluded and why, and where testing must focus first, while accounting for our real-world limits on time, budget, and environment availability.
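The risk and scope components above can be sketched as a small risk register. This is only an illustration under stated assumptions: the 1 to 5 scales, the likelihood × impact score, the `Risk` and `select_scope` names, and the sample risks are all hypothetical and not part of any standard or tool mentioned in this article.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    """One entry in a hypothetical risk register."""
    area: str
    likelihood: int      # assumed scale: 1 (rare) .. 5 (almost certain)
    impact: int          # assumed scale: 1 (cosmetic) .. 5 (business-critical)
    effort_days: float   # rough testing effort needed to cover this risk

    @property
    def exposure(self) -> int:
        # Simple likelihood x impact scoring; the formula is an assumption.
        return self.likelihood * self.impact


def select_scope(risks: list[Risk], budget_days: float) -> tuple[list[Risk], list[Risk]]:
    """Split risks into (in scope, out of scope) for a limited testing budget.

    Risks are visited in order of descending exposure. A risk that does not
    fit into the remaining budget is recorded as explicitly out of scope.
    """
    in_scope, out_of_scope = [], []
    remaining = budget_days
    for risk in sorted(risks, key=lambda r: r.exposure, reverse=True):
        if risk.effort_days <= remaining:
            in_scope.append(risk)
            remaining -= risk.effort_days
        else:
            out_of_scope.append(risk)
    return in_scope, out_of_scope


# Illustrative data only.
risks = [
    Risk("payment processing", likelihood=3, impact=5, effort_days=4),
    Risk("profile avatar upload", likelihood=2, impact=1, effort_days=2),
    Risk("checkout under load", likelihood=4, impact=4, effort_days=5),
    Risk("legacy report export", likelihood=2, impact=3, effort_days=3),
]

covered, excluded = select_scope(risks, budget_days=10)
for r in covered:
    print(f"IN  {r.area} (exposure {r.exposure})")
for r in excluded:
    print(f"OUT {r.area} (exposure {r.exposure})")
```

The useful part is the second list: what is excluded is written down together with its exposure, so the "why" behind the scope stays visible to stakeholders.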

A test strategy in software testing is a high-level document that connects the big picture vision to the daily actions of the team. It defines the overall approach, principles and logic flow required to provide stakeholders with the critical information they need to make informed decisions. The flow follows a strategic sequence: Diagnosis (The Problem) → Guiding Policy (The Approach) → Coherent Action (The Plan).

Within this framework, complementary artifacts such as the Test Plan, Requirements Traceability Matrix (RTM), and Documentation Analysis serve as the foundational tools to ensure complete coverage and transparency of the testing process.

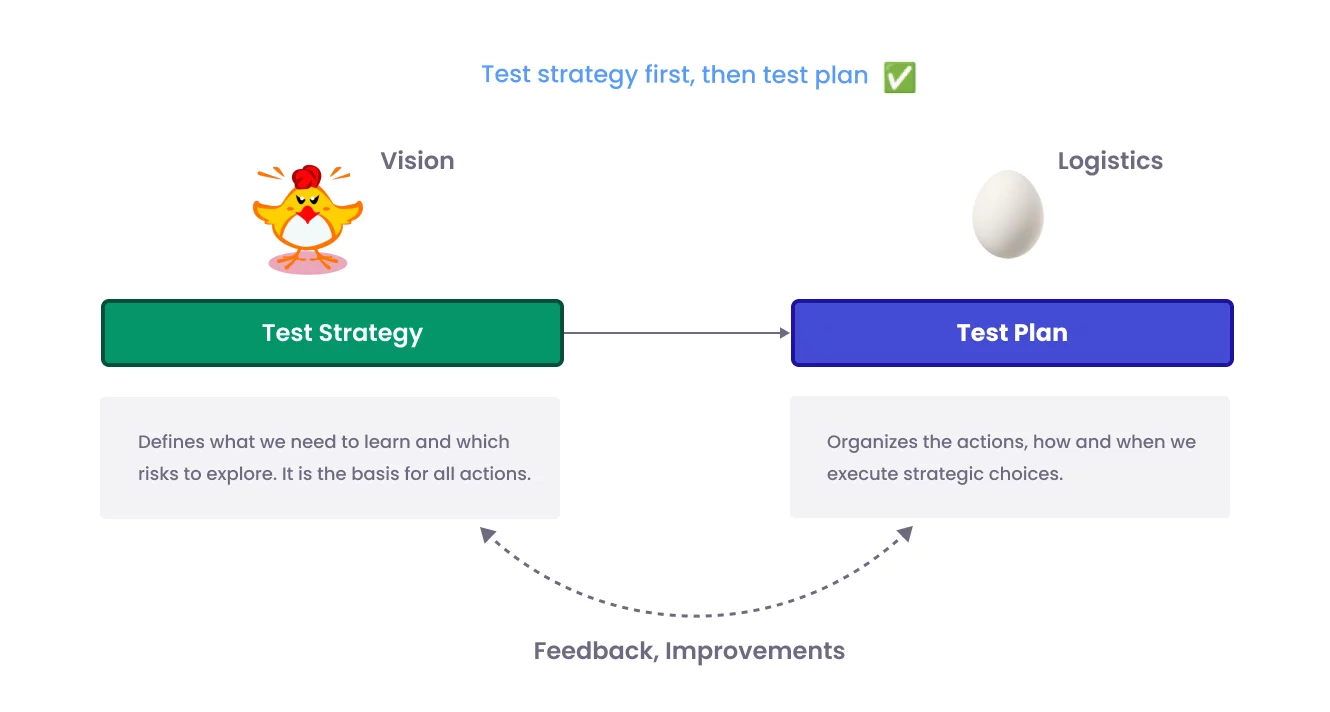

Strategy vs. Plan: The “Chicken and the Egg” of Testing

This is a common dilemma in software testing and quality assurance: which should come first, the Test Strategy or the Test Plan? Confusing strategy with planning is the second common mistake in QA, even among seasoned professionals. What exactly can we extract from this widely recognized comparison table?

| Test Strategy | Test plan |
| --- | --- |
| The test strategy is a high-level document that captures the approach we use to test the product and achieve the goals. | Test plan document is a document which contains the steps for all the testing activities to be done to deliver a quality product. |
| Components of the test strategy include Scope and overview, Test Approach, Testing tools, Industry standards to follow, Test deliverables, Testing metrics, Requirement Traceability Matrix, Risk and mitigation, etc., Reporting tool and Test summary. | Components of the test plan include Test Plan Identifier, Features To Be Tested, Features Not To Be Tested, Approach, Pass/Fail Criteria, Suspension Criteria, Test Deliverables, Responsibilities, Staffing and Training Needs, Risks and Contingencies, etc. |
| The project manager develops it. | It is prepared by the test lead or the test manager. |
| It is derived from the Business Requirement Specifications (BRS) | It is derived from the Product Description, SRS. |
| It is defined at the organisation level and can be used for other projects of a similar nature. | It is defined at the project level. |

What is this test plan actually about?

A plan is simply the roadmap for executing our vision. Our vision, however, is defined by our strategy. Strategy is the foundation, even if it feels abstract without a real project context. We must first understand the specific problem we are trying to solve and the methods we will use to solve it. Only then can we create a plan — a list of agreements between the team and the client — to execute that strategy.

The plan implements the strategy!

A detailed Test Plan needs that strategic direction to avoid chaotically reinventing the wheel every sprint or release. At the same time, creating a meaningful plan requires understanding the product’s risks, scope, and quality goals (which the strategy helps define).

So, the correct order is to go from the test strategy down to the test plan:

- Identify the problem and risks.

- Choose a testing approach (strategy).

- Create a test plan to execute that strategy.

Many think this is a “chicken and the egg” situation, but it is not. A good plan is born from a clear understanding of why a specific strategy was chosen. That is the only way to move forward and effectively solve the testing problems. At the same time, without a plan, we cannot validate whether the strategy actually works in reality.

The Illusion of Testing in Agile

In Agile contexts (Scrum, Kanban), and especially now with AI-assisted testing, this illusion usually sits at one of two extremes:

- Too little: “We are Agile, let’s just build something, show it in two weeks, and keep moving. We do not need heavy documentation, we test continuously!” So development teams skip the strategy or keep a very lightweight one, just a one-page mention.

- Too much: Testing teams create full ISTQB-style test plans for each sprint and release, with hundreds of test cases generated by AI, exact schedules, and entry/exit criteria.

When testing lacks a strategic foundation, it results in chronic inconsistency across sprints, releases, and teams. One sprint might focus heavily on UI automation, while the next shifts to manual exploratory testing with no coherent focus on risk. Without a strategy, every sprint reinvents the testing process, creating a pure illusion of progress. This leads to duplicated efforts, overlooked non-functional requirements (such as performance, security, and accessibility), and a steady accumulation of defects in production.

Rigid plans become outdated by mid-sprint due to shifting requirements, leaving testers to either ignore the plan entirely or waste valuable time constantly updating it. The result is the illusion that a comprehensive plan exists, although it no longer reflects project reality.

The following signals of illusion in Agile testing are extremely dangerous:

- Teams show metrics that suggest productivity but do not correlate strongly with actual software reliability or user satisfaction:

- High automation percentage or code coverage numbers (e.g., “We have 85% coverage!”) while critical business scenarios, edge cases, or non-functional risks remain untested.

- Running thousands of automated regression tests that pass reliably — but production bugs still escape because the suite focuses on happy paths and ignores exploratory testing or real-world variability.

- Relying on velocity/sprint burndown as a proxy for quality, ignoring escaped defects or user feedback.

- Using plans as short-lived tactical tools (not artifacts for audit).
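One way to make the gap between such numbers and real quality visible is to report an outcome metric next to the activity metric. The sketch below is an assumption-laden illustration: the function names, the scenario names, and all figures are invented for the example and do not come from a real project.

```python
from __future__ import annotations


def defect_escape_rate(found_before_release: int, escaped_to_production: int) -> float:
    """Share of all known defects that were first seen in production."""
    total = found_before_release + escaped_to_production
    if total == 0:
        return 0.0
    return escaped_to_production / total


def uncovered_critical(critical_scenarios: set[str], tested_scenarios: set[str]) -> set[str]:
    """Critical business scenarios that no test exercises."""
    return critical_scenarios - tested_scenarios


# Illustrative numbers, not real project data.
line_coverage = 0.85
escape_rate = defect_escape_rate(found_before_release=30, escaped_to_production=10)
gaps = uncovered_critical(
    critical_scenarios={"checkout", "refund", "password reset"},
    tested_scenarios={"checkout", "profile edit", "search"},
)

print(f"line coverage: {line_coverage:.0%}")
print(f"defect escape rate: {escape_rate:.0%}")
print(f"untested critical scenarios: {sorted(gaps)}")
```

In this made-up case the team can honestly claim 85% coverage while a quarter of all known defects were found by users and two critical scenarios have no test at all.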

Testing is not about us just taking something and testing it.

Strategy also matters because of pressure. When we focus on testing, we naturally look for the fastest solution, because we are squeezed by the deadlines that the client, management, and the market set for us, and by the number of sprints in which the release magic should happen. This pressure encourages a lot of testing in isolation.

It is worth noting that the test strategy highlights the problem of isolation. In truth, isolation is very dangerous in software testing, as it creates the illusion of control. Isolation proves that a function exists without ever proving that the product actually works. One question — one answer: If your test checks only one hypothesis: Does this API request work? — you lose! Testing is about finding information about the level of quality, not just testing hypotheses. The use of automation and AI in isolation often fails at this point as well.
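The difference between checking one hypothesis and asking a question about the product can be shown with a toy example. Everything here is hypothetical: `Shop` is a tiny in-memory stand-in for a real system, and the bug is planted on purpose for the illustration.

```python
class Shop:
    """A tiny in-memory stand-in for a real system (purely hypothetical)."""

    def __init__(self):
        self.stock = {"book": 1}
        self.orders = []

    def create_order(self, item):
        # Deliberate bug for the illustration: stock is never checked or reduced.
        self.orders.append(item)
        return 201  # "Created"

    def get_stock(self, item):
        return self.stock.get(item, 0)


def isolated_check():
    """One hypothesis: does the 'create order' request succeed?"""
    return Shop().create_order("book") == 201


def flow_check():
    """A question about the product: can we sell more copies than we have?"""
    shop = Shop()
    first = shop.create_order("book")
    stock_after = shop.get_stock("book")
    second = shop.create_order("book")  # second order for the only copy
    # Expected behaviour: first order accepted, stock drops to 0, second rejected.
    return first == 201 and stock_after == 0 and second != 201


print("isolated check passed:", isolated_check())
print("flow check passed:", flow_check())
```

The isolated check is green because the request "works". Only the flow check, which asks about the behaviour of the whole product, reveals that the shop oversells.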

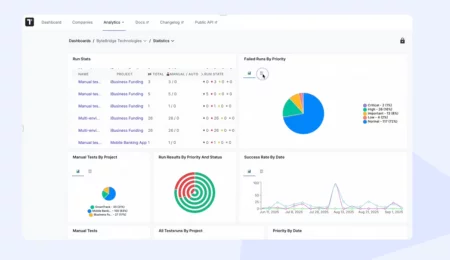

Green dashboard trap of automated test reports

Next, we will discuss one of the most dangerous and widespread pitfalls in modern software quality practices: the illusion created by an automated test suite that consistently passes. It is particularly common in CI/CD-driven environments, where everything shows green on dashboards, reports, and build status. Teams come to believe the software is stable, low-risk, and ready to release, while serious defects still escape to production, users complain, or outages occur.

Yeah, the main problem is isolating automation from the overall vision. You have hundreds of atomic tests and green reports to tell the client that everything is fine, but this is wrong. Automated tests only check what they’ve been explicitly told to check. Functionality may work fine, yet the product fails to meet the actual requirements of the end users.

Another critical factor for successful test automation is testability as part of the design. Some features of your product may be designed in a way that makes them difficult to automate. Ultimately, testing delivers a return on investment only when the product’s architecture is intentionally structured for testability. By prioritizing this, the team expands the possibilities for automation and delivers real business value rather than just closed tickets. The test strategy must be discussed with the development team from the beginning to anticipate architectural roadblocks and suggest alternative approaches before they become technical debt. Otherwise, it is like choosing a specific automation tool before knowing the challenge. Managing test flakiness belongs here too, but we won’t dive into it today. A deep-dive article on the subject: Overcome Flaky Tests: Straggle Flakiness in Your Test Framework

If your team is trapped in a ‘green world’ while bugs continue to escape into production, your priority must be to audit the test suite and refactor it against actual risks. This situation often points to an overloaded suite of low-value, outdated automation that provides a false sense of security and increases the pressure to build and release faster. Do not be afraid to ask which specific problem a particular autotest solves.
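Such an audit can start very simply: map every automated test to the risk it is supposed to address and look at both directions of the mapping. The sketch below assumes the mapping is maintained by hand (for example as tags on tests). The `audit_suite` function, the test names, and the risk names are hypothetical.

```python
from __future__ import annotations


def audit_suite(test_to_risks: dict[str, set[str]], risks: set[str]) -> tuple[set[str], set[str]]:
    """Return (risks no test addresses, tests that address no known risk)."""
    addressed = set().union(*test_to_risks.values())
    uncovered_risks = risks - addressed
    orphan_tests = {
        name for name, linked in test_to_risks.items() if not (linked & risks)
    }
    return uncovered_risks, orphan_tests


# Illustrative data only.
current_risks = {"double charge", "data loss on sync", "login lockout"}
suite = {
    "test_login_ok": {"login lockout"},
    "test_button_color": set(),                 # never linked to any risk
    "test_old_banner": {"banner misaligned"},   # linked to a risk nobody tracks anymore
    "test_charge_once": {"double charge"},
}

uncovered, orphans = audit_suite(suite, current_risks)
print("risks without tests:", sorted(uncovered))
print("tests without a current risk:", sorted(orphans))
```

Tests without a current risk are candidates for removal or rework, and risks without tests show where the green dashboard says nothing at all.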

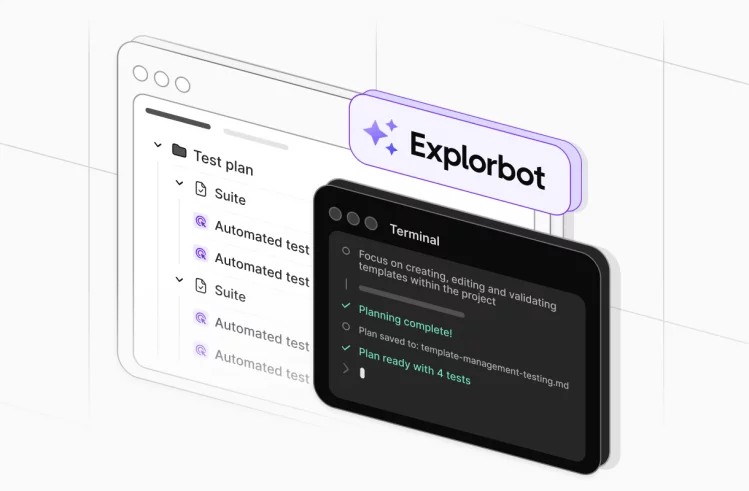

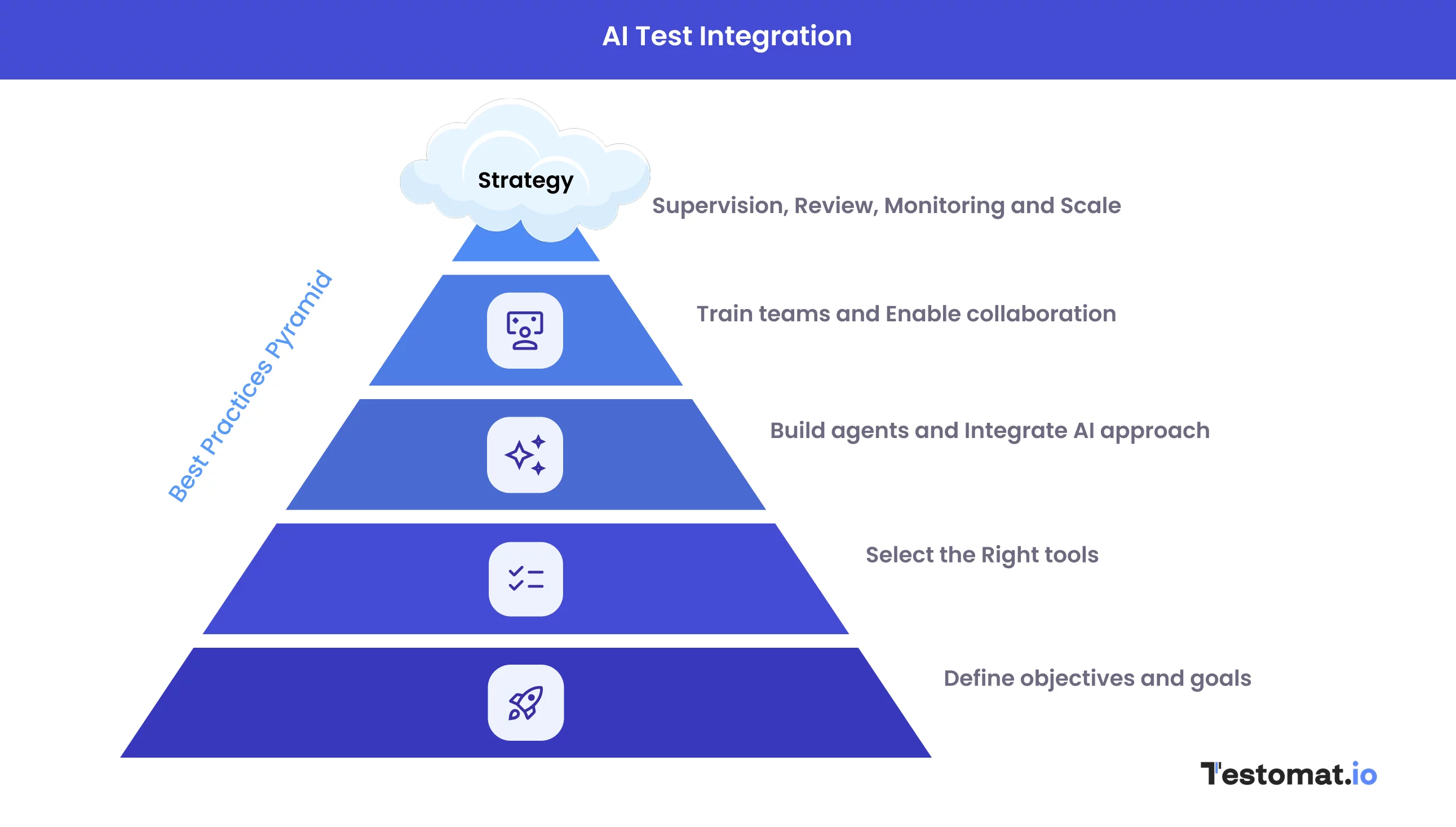

AI & Automation in Test Strategy Implementation

Traditional test strategies focused on manual and scripted test automation approaches with fixed pyramids; today’s strategies treat AI-augmented automation as a foundational layer in the testing pyramid.

This evolution draws clearer roles in the collaboration between humans and AI. QA engineers become quality intelligence specialists who oversee AI outputs. You can use AI as an architectural partner that links Strategy (Vision) with Plan (Logistics) and helps adjust both based on real-time feedback.


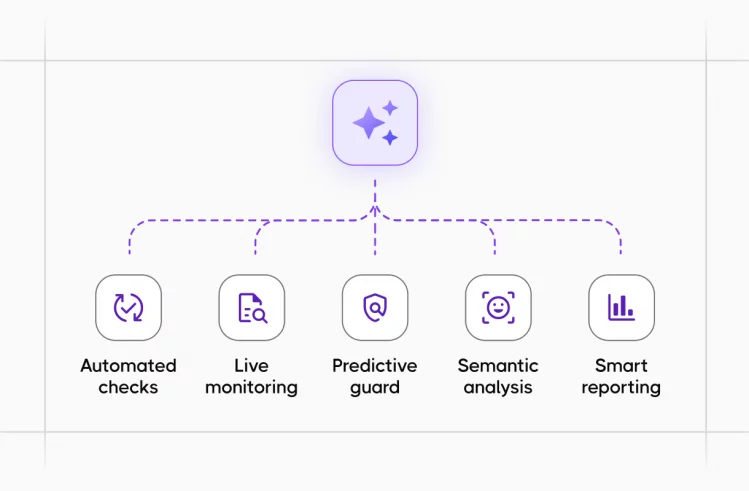

Integrating AI into your test strategy automates time-consuming, repetitive tasks, allowing human testers to shift their focus toward high-value activities, for instance, tracking strategy, exploratory testing, ethics, and complex risk assessment.

GenAI generates test cases and test scripts from natural language requirements, user stories, or even screenshots. It creates synthetic non-linear test data that mimics real-world noise, suggests fixes for broken locators (self-healing), and optimizes suites by removing redundancy. Test strategies now include targets for both regression handling and exploratory discovery.

Use AI to probe the system’s units and the seams between them, which are the most common places for failures, and to recognize patterns that reveal gaps. It helps answer the question: What did we miss? Often it also helps find a solution to the problem.

Feed your requirements into an AI to check for Testability. Ask: “Is this requirement verifiable? What data is missing to make this testable?”

With the widespread use of AI assistants (Copilot, etc.), strategies now mandate checking AI-produced code, test cases, and other artifacts for hallucinations, security flaws, and missed edge cases.

How to eat an elephant: decomposition and After-Action Review

From a strategic thinking perspective, it is essential to decompose and break down a problem into smaller parts. Then you can see the problems, approach them in stages, solve them step by step, and generally see more factors.

When something is huge, essentially, it is tempting to simplify everything. Of course, it should be a part of the strategy.

- Decomposition: We cut the “big elephant” into parts, but often forget how these parts interact. Here, it is important to remember the system as a whole.

- After-Action Review: Strategy cannot be static. Ask yourself the question: Is my approach working? And you need to do this not only after the incident, but also during releases.

Why does test strategy matter?

A testing strategy is a way of structuring critical information that empowers stakeholders to make informed, data-driven decisions.

Stakeholders sit at different levels and have different needs: they may be team members (Project Owner, Project Manager), the end customer, or our clients, and each of them asks different questions. A strategy gives them a deeper understanding of the product and helps them decide whether to release or to invest further, a decision that requires time and the right conditions. The following points summarize what a strategy does for them:

— Test strategy turns “let’s test something” into a focused search for decision-making information.

— Test strategy helps guide the development schedule.

— A good strategy protects from testing in isolation and the illusion of control.

— A good test strategy answers the following questions: What is the current level of quality, and are we ready to proceed (Go/no Go)?

— Optimising only for “what is easy to test” can damage product value.

— Testability is a design responsibility, not just a testing problem.

— Automation is a part of the test strategy, not a replacement for it.

— Any practical test strategy must take into account time and constraints.

By utilizing targeted questions, you can curate a list for your specific project — going beyond standard templates to uncover unique project insights. More than one Answer; More than one question.

In addition, it is worth highlighting the insights of Wayne Roseberry:

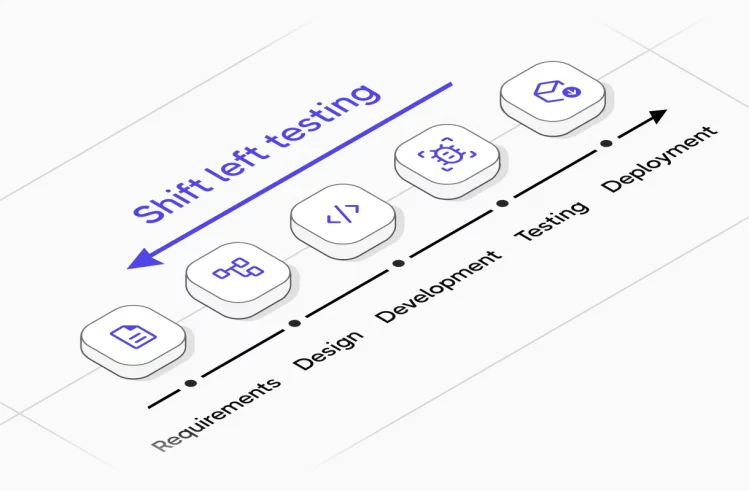

Moving beyond the testing phase: a solid testing strategy does more than dictate a testing schedule or timeline and set up the conditions for testing. It guides the entire development lifecycle, not only the testing phase.

For QA managers | How to sell a strategy to the customer and the team?

The most common question: How can you justify spending 16 hours writing a strategy when you could be writing a bunch of autotests in that time?

Testing is not just a process. Testing provides the client with the information they need to decide whether the product will make money, or whether it can be released to the market at all. Building a strategy may even show that half of your activities are unnecessary, which saves money.

The word “document” scares everyone: developers, managers, and even QA engineers themselves. People want to write code and tests, not executive summaries in English. In risk management terms, a test strategy is insurance that keeps your testing from crumbling within a week, and it is worth its cost. Strategy is a preemptive strike against risks. It collects everything that could stop our releases.

A trick from Yevgeny:

Don’t tell the team that you are writing a strategy right now 😏

→ Start by discussing the problem.

→ Collect the risks that the team will voice.

→ Discuss how to mitigate these risks.

💡 This is the basis of your strategy!

Then you simply record these agreements as an action plan. And sell the customer not a piece of paper, but a case study of a specific dangerous area that will save them money.

When to review strategy?

❌ Strategy cannot be static. It is necessary to monitor whether the strategy is drifting or degrading. Even when the testing process seems smooth, it is vital to conduct a retrospective and think about what can be improved. What went well, and what did we miss? These guiding review questions help you audit the strategy and refine the approach.

— When did we actually realize whether our test strategy worked – before release, during an incident, or only after?

— What signals did we miss that should have told us to change the strategy earlier?

— Did our strategy focus on the most important risks, or only on what was easiest to test?

— What did our tests in isolation fail to tell us about the behaviour of the whole system?

— Did we collect enough information about product quality to support a clear Go / No Go decision?

— Where did testability (design, architecture, data) help us and where did it block us?

— Who in the team felt responsible for challenging the current strategy or saying that our decomposition was leading us in the wrong direction?

Ultimately, this framework is designed to provide a definitive answer to the critical question highlighted above: Is the product, in its current state, ready for release into production?

If you do not ask yourself the question: Is my strategy working?, then you are simply using a mediocre template that does not bring any benefits.

Feel free to implement Quality Gates at every stage, level, and testing approach; they create a structured flow of data. At each gate, you analyze the gathered information to determine how it contributes to your overall understanding of the product’s quality.
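A minimal sketch of how such gates could feed a Go / No Go decision is shown below. It is an illustration, not a prescribed implementation: the `Gate` type, the gate names, the thresholds, and the metric keys are all assumptions made up for this example.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Gate:
    """A named check over collected quality metrics (hypothetical model)."""
    name: str
    check: Callable[[dict], bool]


def evaluate(gates: list[Gate], metrics: dict) -> dict[str, bool]:
    """Evaluate every gate so stakeholders see the full picture, not just the first failure."""
    return {gate.name: gate.check(metrics) for gate in gates}


# Thresholds and metric names are illustrative assumptions.
gates = [
    Gate("unit tests", lambda m: m["unit_pass_rate"] >= 0.99),
    Gate("critical defects", lambda m: m["open_critical_defects"] == 0),
    Gate("performance", lambda m: m["p95_latency_ms"] <= 500),
]

metrics = {"unit_pass_rate": 1.0, "open_critical_defects": 1, "p95_latency_ms": 420}

results = evaluate(gates, metrics)
decision = "Go" if all(results.values()) else "No Go"

for name, passed in results.items():
    print(f"{name}: {'pass' if passed else 'FAIL'}")
print("decision:", decision)
```

Evaluating all gates instead of stopping at the first failure is a design choice made here so that the report shows everything that blocks a release at once.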

Golden rule: Ideally, time for After-Action Review (analysis after actions) and work on risks should be built into your contract.

The best teams avoid paralysis by starting small: draft a minimal viable strategy → apply it to the next release’s plan → retrospect and iterate. Treat it as an ongoing process: Review/Refresh strategy quarterly as the strategy matures.

Summing up

We’ve learned that strategy is primarily about communication and understanding the system. A lack of well-tuned processes and strategies can cost companies millions in losses. Strategy is always about risks and how to deal with them proactively. A testing strategy should not be a siloed phase at the end; it must be woven throughout the software development life cycle (SDLC) from start to finish. The strategy only becomes truly valuable after you’ve run a handful of real Test Plans and learned what actually works.